This section explores different assessment techniques that I have used or hope to use in teaching. These include informal formative assessments, which have no consequences for students, and formal summative assessments, which are grade-based.

In the future, I hope to use standards-based grading as a way to involve students in assessment. The grading system that I am used to is not clearly related to my assessment of student learning. Student achievement on summative assessments is summed up in a single number, students are ranked by this number, and their grades are based on this ranking. I do not like this system. I would prefer that students not compete against one another for top spots, and that a student's grade be based only on their own achievement, not on the achievement of their classmates.

To include students more explicitly in assessment, I would give them a list of the standards that they are expected to master, that is, the learning objectives that they are expected to achieve by the end of the semester. Instead of receiving numbers on summative assessments, students would be rated according to their progress towards achieving each of the relevant learning objectives. Students care about grades, but I want them to care about their progress in learning the material. Finally, although exams are used for assessment, the final grade doesn't provide much feedback on student understanding. I would like a better record of student progress.

Lower Order Thinking

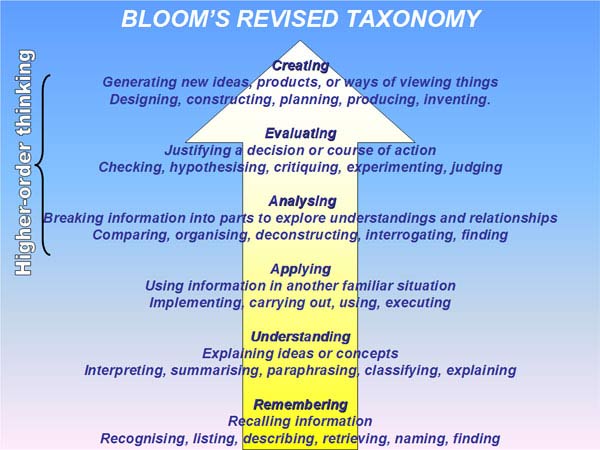

Bloom's Revised Taxonomy includes the following three types of lower order learning:

Remembering, Understanding, and Applying.

The following are examples of assessments that I use or wish to use to assess the learning of my students at these levels. I usually think of assessments in this category as being almost entirely content-based, with most assessment done at the levels of Understanding and Applying.

Quick Check Discussions

I use this formative assessment technique very frequently to check that students understand definitions, techniques, and theorems. I leave out the justification for a step, ask students to use a definition, or ask them to try a very straightforward problem. Students think for a minute and then discuss with one another. After a few minutes, either I explain or a student provides the explanation. I use both Good Questions and my own questions.

I like this assessment technique because talking about math and explaining your thinking is a good way of consolidating knowledge, and these breaks allow students to check whether they understand the basic ideas well enough to explain them. It gives them an opportunity to ask questions, and it lets me revisit ideas that they did not understand. When these discussions are used regularly, students adjust well to working in pairs.

Example: The function f(x) = 1/x is not a decreasing function. Draw the graph of 1/x, then turn to someone sitting near you and explain why it is not a decreasing function.
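One explanation that students might arrive at is sketched below. It is an illustration, not a record of what was said in class, and it assumes the usual definition: f is decreasing if x_1 < x_2 implies f(x_1) > f(x_2) for all x_1, x_2 in the domain of f.

```latex
% Two points on opposite sides of 0 violate the definition:
\[
  -1 < 1 \quad\text{but}\quad f(-1) = -1 < 1 = f(1).
\]
% On each branch separately, the derivative is negative:
\[
  f'(x) = -\frac{1}{x^{2}} < 0 \quad\text{for all } x \neq 0,
\]
% so f is decreasing on (-\infty, 0) and on (0, \infty),
% but not on the whole domain (-\infty, 0) \cup (0, \infty).
```

The graph makes the same point visually: each branch falls from left to right, but the right branch sits above the left one.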

WeBWorK assignments

WeBWorK, which is growing in popularity, is an online homework system that allows randomized computational problems to be assigned. Using this system makes it possible to assess a student's ability to solve relatively mechanical problems without creating a grading burden. Moreover, such systems give students instant feedback about their skills, since grading is instantaneous. If multiple attempts at a solution are allowed, these assignments are also formative assessments, since students get feedback from the grading of each attempt that they can react to. I have experimented with WeBWorK but have not used it in the classroom. I hope to add it to my classroom in the future.

Mastery Quizzes

Students are allowed to make multiple attempts at a proper solution. The idea is that if they don't understand something, or they have a poor strategy for approaching problems, they realize this during the course of the quiz and learn a better way. Doing this in a quiz makes the students persistent. Below is an example of such a quiz. This example tests the application level of learning.

This quiz was intended to do two things: (1) make sure that students knew basic differentiation rules, and (2) encourage systematic, measured problem-solving, including breaking the problem into easier pieces, using parentheses carefully, and so on. Students were allowed to email solutions, but were threatened with a 0 if they did not get it 100% correct. To be successful in this class, students need to have these basic skills.

This assignment was successful for those who started early. Students were often unable to do the computation all at once, but had good success after being coached to do it in steps. This assignment was NOT helpful for those who submitted on the due date. They didn't realize that they didn't understand until it was too late. They got 0 points and received no coaching.

For next time: require students to turn in solutions after one day. Give them a week to make further attempts.

Further thought needed: Should students have to do another problem set after being coached through this set? Except for the most lost students, I didn't point out where mistakes happened. Is it fair to set the very lost students extra problems? Perhaps this could be set up as an exchange. I'm willing to find errors in exchange for the student agreeing to do another few problems.

Student Summaries

Every student is asked to present a 5-minute summary of the most important ideas from the last class and to give an example. If students were not giving summaries, I would be doing it anyway. When students prepare for the summary, it can be very helpful for assessing their understanding, as they move away from a recitation of what was done and try to embellish further. When they add their own material, we get a picture of their thinking. Correct summaries are fine, and sometimes add another perspective, but summaries with problems in them are often more helpful. If one student has some misconception, usually multiple students have the same problem, and the summary becomes the starting point of a discussion of the misconception. Summaries also give students a chance to practice talking about math.

In the future, to make this exercise more valuable, I will make a rubric for the summary and provide written feedback to the summarizer. I might also ask the student who is next in line to assess the current summary using the rubric, so that I know they have looked at the rubric before their own summary is due.

I would like to make the class more critical of the summaries. A fun way to do this might be to ask students to intentionally include a mistake or a false statement in their summary (and have an explanation of why it is false). Then the class might have to try to find it.

Higher Order Thinking

Bloom's Revised Taxonomy includes the following Higher Order categories of learning:

Analysing, Evaluating, and Creating

For the following activities, I provide rubrics so that students know what evidence of learning I am looking for. The use of rubrics for assessment aligns with my teaching philosophy of providing students with meaningful feedback and clear expectations.

Especially in problem-focused courses such as introductory math courses, students are often unsure about our expectations for their work. Indeed, many believe that we care only about them getting the correct answer, and they rarely stop to think about why the question was asked in the first place. In these rubrics, I hope to signal to students that I care about both content and non-content learning objectives for the course.

Analysing/Evaluating: Math Labs

The rubric below was created as the general rubric for a weekly calculus lab. The general purpose of these labs is to expose students to real-world problems for which calculus might be a useful tool in getting a solution. Hopefully this helps students translate their knowledge out of the calculus classroom to other contexts where they might want calculus. In this rubric, I aim to assess higher order skills such as reframing questions as mathematical questions and evaluating strengths and weaknesses of the approach. The levels of achievement are posed in developmental terms and the descriptions do not ask for exceeding expectations, as this is not very helpful guidance for students.

Concept Questions

I informally assess students' higher-order thinking with ungraded conceptual questions.

The following example comes from a linear algebra recitation that I gave in Spring 2015. In problem 2, students are asked to generate their own example. If they have a good understanding of the definition of linear independence, this is relatively straightforward. Because this is not a mechanical question, I can be confident that a student who can do this question really understands the definition. A student who does not understand the definition should realize it when attempting this problem.

Creating: Counterexamples

The highest level of Bloom's Taxonomy is creation.

If a statement is false, a counterexample is an example that shows it is false. As a favorite professor once said: when writing proofs, you follow your nose. Often there is only one natural course of action. Counterexamples must be hunted down.

Whether one considers math to be discovered or to be created, finding counterexamples to false statements often requires significant synthesis. You must figure out what goes wrong in an attempted proof, then create an example with exactly those problems. If a student can find a counterexample to a false statement, then they have a very good understanding of the theory.
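As an illustration of this process, consider a standard false statement from calculus. The statement is chosen here only for the sketch and is not taken from the course materials: "If f'(c) = 0, then f has a local extremum at c."

```latex
% An attempted proof stalls at the step where f' must change sign at c:
% f'(c) = 0 alone does not force a sign change.
% So we look for a function whose derivative vanishes at c without changing sign.
\[
  f(x) = x^{3}, \qquad f'(x) = 3x^{2}, \qquad f'(0) = 0.
\]
% Here f'(x) > 0 for every x \neq 0, so f is strictly increasing:
\[
  f(x) < f(0) = 0 < f(y) \quad\text{whenever } x < 0 < y,
\]
% and therefore f has no local extremum at c = 0.
```

The counterexample is built from exactly the step where the attempted proof breaks down, which is the kind of synthesis described above.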

Reflections on Standard Assessments

Assessing Reading and Talking

For introductory math classes, two of my course learning goals for students are developing skills in reading and talking about math. I believe that skills in both reading and talking are developed through practice, so it is this practice that I assess. To help teach students how to read math, I provide a variety of reading strategies and occasional short reading assignments to ensure that they try these strategies out. To practice talking about math, we have frequent pairwise discussions in class, and every student is required to give a five-minute summary of a class, with an original example or question.

These skills are difficult for me to assess fairly, so I tried having students do a simple self-assessment. I reviewed these assessments and compared them with my knowledge of their preparation via reading activities and their participation in class (since some classes were less confident and rated themselves non-participatory when they were plenty participatory). The survey I used was the following:

I feel that I participated in class: (possible evidence: pairwise discussion, asking questions, answering questions, sending questions ahead of time)

Most of the time, Some of the time, Never

I feel that I came to class prepared: (possible evidence: reading before class, trying reading strategies, doing reading activities)

Most of the time, Some of the time, Never

In the future, I would like to do self-assessments 3 or 4 times during the semester so that students can work to improve their participation and preparation. Multiple self-assessments would also allow me to provide feedback to students on the quality of their participation. I will also provide a rubric at the beginning of the year, so that students have a better idea of what is expected of them.

Homework

Homework is one of the Holy Trinity of assessments traditionally used in introductory quantitative courses. Indeed, problem sets are an important part of these courses: much learning seems to happen when students struggle with problems outside of the classroom. When choosing homework problems, I try to arrange that homework takes about two or three hours for someone who is just learning, and I try to include a wide variety of problems, so that students get to see the ideas that they are learning applied in a few contexts. My hopes for the future are to automate mechanical problems through WeBWorK and to give 1 or 2 more involved problems nightly. I will provide feedback, record participation, and may use homework for assessment, but I will not give grades. One drawback of this method is that it may not scale well to large classes, and it might create excessive paperwork for me. I think that this is balanced out by the good of providing feedback to students, giving me ample opportunities for assessment, and encouraging students to be engaged in the course every day.

Exams

As soon as we want to ask problems that are more than mechanical, I do not like time limits. They advantage quick students and disadvantage slow students, and it is inauthentic to have problems for which a solution in 10 minutes is acceptable but a solution in 15 minutes is unacceptable. Similarly, in real life, we are rarely without resources, so I want to think more about how to implement take-home exams. One of the big advantages of exams is the intense review and revisiting of material that students engage in through studying. Although take-home exams might be better assessments, I have some reservations that the synthesis at the end of the course might be wiped out by using take-home exams.

In the context of 1.5-hour exams given in large courses, I have recently pushed for the following:

- segmentation: problems should only test one or two skills at a time, if these skills are not tested in other places

- multiple options for difficult questions: tests are timed and stressful. Particularly in introductory classes, students are often expected to solve complicated word problems. Offering multiple options corrects for the problem of students not being able to understand problems in the timed environment, and gives students a better opportunity to show us what they know.

- test design coordinating with grading: the test designer should have a clear idea of what each problem is testing, and the grading rubrics should reflect that. For example, if a problem is intended to test whether or not a student can graph a function, they should not need to take a complicated derivative first.

Example: Exam Problem

The following three problems are exam problems from Math 1110, a Calculus I class taught at Cornell in Summer and Fall 2015. These three questions have different scopes, but are about the same material. I wrote the first and was a major contributor to discussions about the other two.

The first problem was an attempt to assess the skill of graphing through analysis of first and second derivatives. This was not a successful problem. Students often failed to calculate correct derivatives, which left them with a lot of contradictory data to try to piece together.

The second problem was also meant to assess the skill of graphing through analysis of first and second derivatives. To simplify the procedure, the first derivative is provided and questions are asked only about the second derivative. Unfortunately, students again struggled greatly to find the second derivative and to perform the algebra necessary before data about the first and second derivatives could be analysed.

The last version is from the final. Here the problem has been broken down, and a smaller collection of skills is being assessed: can students interpret information about a function and its first and second derivatives in order to sketch a graph? A separate question tested whether or not students could generate such information. Students performed extremely well on this question. Although we didn't see whether or not students were able to integrate the process of generating and interpreting information, our previous attempts at assessing the full process in an exam setting had been unsuccessful.

Reflection on how we gather evidence of student understanding has influenced my thinking about how the material should be presented in the first place. For example, it appears that the biggest stumbling block in graphing problems is generating information about the first and second derivatives. When students first learn graphing, perhaps I should remove that barrier, teach the skill of interpretation, and then add the barrier back in later, once I am confident they understand how to interpret the information.